The Devil is in the Details

This is the first in a series of posts I intend to write about some of the nuances of clinical research and statistical techniques. The introduction is long-winded, but I think it is useful to explain why I think this is so important.

Our current approach to clinical research has fundamental flaws

In 2014, clinical research forms the backbone of our medical practice. Unfortunately, there is a growing feeling that our systems of research have not been serving us as well as we think. Amongst the many excellent talks at smaccGOLD in 2014, a number of speakers highlighted the limitations and conflicts of interest that are a widespread problem in the conduct and publication of clinical trials.

Journals are often motivated to publish for reasons other than the quality of the paper, the influence of industry is often perverse and not obviously apparent, and the safeguards meant to prevent fraudulent publication have proven to be fallible. This has led to important journals publishing inaccurate and even fabricated data, potentially causing irreparable harm to patients.

One of the potential solutions is open access publication. All trials conducted should be published and made available for free, including the publication of trial data. This is the motivation behind Rob McSweeney’s exciting new online journal Critical Care Horizons.

There is an increasing number of open access journals in many fields that appear to follow this principle. It is important to understand the financial motivation behind many of these journals. Unlike Critical Care Horizons, they often charge authors for publication. Authors may be motivated by the ease of publication, and the journal publishes simply because the fee has been paid. This means the quality is often not of the same standard as that of established journals.

The main concern about open access publication is the removal of the peer review process. Whilst peer review is in many ways a flawed technique, it is the accepted method for ensuring that only research of sufficient quality reaches clinicians for widespread appraisal and, potentially, changes practice.

Critics would argue that peer review has failed on a number of occasions and a more transparent process is required. Currently no one is offering an ideal solution.

Both sides of this argument are best summarised by Professor Simon Finfer’s fantastic talk on The Dark Side of Research given to the Sydney Intensive Care Network earlier this year.

What can we do as medical professionals?

Whatever the source and format of clinical research, the only way we can prevent ourselves from being misled or lied to is by being savvy consumers. This means we need an understanding of increasingly complex methodological and statistical techniques. To date, our medical education and training have not provided a good foundation for acquiring these skills.

In my opinion, public health, statistics and epidemiology classes are amongst the most tedious and most poorly attended at university (at least they were when I was a student).

Critical care colleges test a basic understanding of statistical techniques in primary or fellowship exams, but knowing the difference between sensitivity and specificity is no longer sufficient in a world where regression models are king.

All the colleges require the completion of a research project or similar. Unfortunately, the format of these projects often renders them little more than a barrier to completing training, without ensuring that they provide the skills to assess and interpret publications to guide practice. The recent move towards accepting approved courses from recognised universities is hopefully a step in the right direction.

What does FOAM have to offer?

FOAM has filled the gap in medical education where more established formats have failed to move quickly enough to assimilate new ideas into current practice. In terms of clinical research, many blogs function as online journal clubs, publishing breakdowns and interpretations of major trials. Several websites, such as StEmlyns, have discussed different statistical techniques, although this is not an easy subject to write about: it is extremely dry, and the benefits of a more detailed understanding are not always apparent.

Whilst I am by no means an expert in clinical research, I have a particular interest in trials and their design. My aim is to write a series of posts about some of the finer details of research and statistical techniques. The hope is that this will make it easier to understand the language and techniques used in publications. My general approach will be to concentrate on key practical points, keep posts short(ish) and where possible use well known trials to highlight these ideas.

With that in mind I wanted to make my first post about CONSORT (Consolidated Standards of Reporting Trials).

CONSORT Statement and the CONSORT Flow Diagram

CONSORT is a group of researchers that published a 25-item checklist in 2010. The checklist highlights the key points that should be included in the write-up of a clinical trial. It is an excellent framework for structuring and writing a paper, and I would encourage anyone in the process of conducting clinical research to use it.

Occasionally the checklist is presented as a way to critique published papers. This is generally a flawed idea, as you end up assessing the write-up rather than the experiment.

The same group also produced a similar statement for systematic reviews called PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses). Both checklists detail what should be in a write-up and where it should be located.

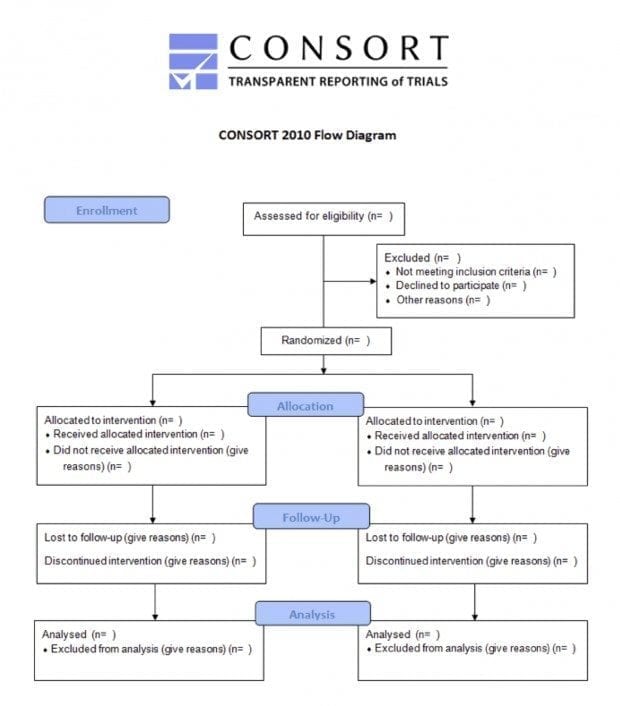

Part of this checklist is a flow diagram. The CONSORT Flow Diagram is a simple box diagram that details the passage of participants (or papers, in the case of PRISMA) through the trial, from initial screening all the way to follow-up. Every published trial should include one of these diagrams; it will usually be located somewhere within the published material and is a useful source of information when assessing a paper.

This diagram details patient numbers at each stage of the process, allowing assessment of aspects of trial conduct such as the number receiving the intended allocation, the number lost to follow-up and the number included in the final analysis. In its best form, the flow diagram will also explain why patient numbers changed at each stage, such as the reasons for excluding patients after screening.
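To make the bookkeeping behind a flow diagram concrete, here is a minimal Python sketch, assuming each box can be reduced to a stage name, a participant count and the itemised reasons for removal. The `Stage` class, the `check_flow` function and the toy trial numbers are all hypothetical, invented purely for illustration; the idea is simply that each box should equal the previous box minus the exclusions explained between them.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Stage:
    """One box in a simplified, hypothetical model of a CONSORT flow diagram."""
    name: str
    count: int
    # Reasons participants were removed on the way INTO this stage,
    # mapped to the number removed for each reason.
    removed: dict[str, int] = field(default_factory=dict)


def check_flow(stages: list[Stage]) -> list[str]:
    """Return a message for every transition whose numbers do not add up.

    For each pair of consecutive boxes, the earlier count minus the
    itemised removals should equal the later count.
    """
    problems = []
    for prev, cur in zip(stages, stages[1:]):
        expected = prev.count - sum(cur.removed.values())
        if expected != cur.count:
            problems.append(
                f"{prev.name} -> {cur.name}: expected {expected}, reported {cur.count}"
            )
    return problems


# Hypothetical toy trial (invented numbers, not from any real study).
toy_trial = [
    Stage("Screened", 500),
    Stage("Randomised", 120, {"Did not meet inclusion criteria": 300,
                              "Declined consent": 60}),
    Stage("Analysed", 115, {"Lost to follow-up": 5}),
]

for problem in check_flow(toy_trial):
    print(problem)  # prints: Screened -> Randomised: expected 140, reported 120
```

In this toy example 20 screened participants are unaccounted for between screening and randomisation, which is exactly the kind of gap a well-constructed flow diagram should make visible.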

One of the main benefits is that it allows readers to assess external validity in more detail. One of the fundamental aspects of external validity is the inclusion/exclusion criteria. The problem is that it can often be hard to understand the effect that they have on the size and make-up of the final trial population, and how relevant the trial patients are to the broader population. An excellent example of the benefits of the flow diagram can be found in the DECRA study.

DECRA (Decompressive Craniectomy in Diffuse Traumatic Brain Injury)

DECRA was published in NEJM in 2011 and is an exceptional study. The fact that they were able to conduct an RCT on such an invasive procedure is impressive enough, but it was also well conducted with robust methodology.

In the DECRA trial, researchers compared a conservative approach to decompressive craniectomy with a very aggressive one in the management of elevated ICP (>20 mmHg sustained for more than 15 minutes) in the first 72 hours following closed traumatic brain injury.
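The ICP trigger described above is essentially a run-length rule. The Python sketch below is one hypothetical way to express it, assuming ICP is sampled once a minute with no gaps and reading "sustained" as continuous elevation; the function name and parameters are illustrative, and the trial protocol's exact definition should be checked in the original paper before relying on it.

```python
from __future__ import annotations


def sustained_icp_elevation(icp_mmhg: list[float],
                            threshold_mmhg: float = 20.0,
                            min_minutes: int = 15) -> bool:
    """Return True if ICP stays above `threshold_mmhg` for more than `min_minutes`.

    Assumes one reading per minute with no gaps, so a run of n consecutive
    readings above the threshold is treated as n minutes of elevation.
    """
    run = 0  # length of the current unbroken run above the threshold
    for value in icp_mmhg:
        run = run + 1 if value > threshold_mmhg else 0
        if run > min_minutes:
            return True
    return False


print(sustained_icp_elevation([22.0] * 16))  # prints: True (16 minutes above 20)
print(sustained_icp_elevation([22.0] * 10 + [18.0] + [22.0] * 10))  # prints: False
```

A single reading at or below the threshold resets the run, so the second example never qualifies even though it contains 20 elevated minutes in total.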

The primary outcome measure was revised to disability level at 6 months, as defined by the Extended Glasgow Outcome Scale. Their findings favoured a conservative approach: 70% of the surgical group had unfavourable outcomes, compared with 51% of the conservative group. This has led many clinicians to be more cautious with this surgical procedure than they were before its publication.
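The headline percentages can be turned into the measures commonly used to judge the size of an effect. The Python sketch below is illustrative only: it uses the rounded percentages quoted above rather than the raw counts, so these crude figures will not exactly match the estimates reported in the paper, and `effect_measures` is a hypothetical helper name.

```python
from __future__ import annotations

import math


def effect_measures(p_treatment: float, p_control: float) -> dict[str, float]:
    """Crude effect measures for a binary (unfavourable vs favourable) outcome."""
    ard = p_treatment - p_control  # absolute risk difference
    relative_risk = p_treatment / p_control
    odds_ratio = (p_treatment / (1 - p_treatment)) / (p_control / (1 - p_control))
    # Number needed to harm, conventionally rounded up; only meaningful when
    # the treatment group has MORE unfavourable outcomes (ard > 0).
    nnh = math.ceil(1 / ard) if ard > 0 else math.inf
    return {
        "absolute_risk_difference": ard,
        "relative_risk": relative_risk,
        "odds_ratio": odds_ratio,
        "number_needed_to_harm": nnh,
    }


# Rounded DECRA figures: 70% unfavourable (surgery) vs 51% (conservative).
for name, value in effect_measures(0.70, 0.51).items():
    print(f"{name}: {value:.2f}")
```

With these inputs the sketch gives an absolute difference of 19 percentage points, a relative risk of about 1.37, a crude odds ratio of about 2.24 and a number needed to harm of 6: roughly one additional unfavourable outcome for every 6 patients treated surgically.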

There are several remaining controversies with DECRA regarding surgical technique, timing of intervention, the ICP trigger and how these findings should be extrapolated into clinical practice.

One of the concerns was how applicable the results are to all patients with significant brain injury; this is a question of external validity. The inclusion and exclusion criteria were as follows.

Inclusion Criteria:

- Aged 15-59 years

- Suffering severe, non-penetrating traumatic brain injury, defined as GCS 3-8 or Marshall class III on CT

Exclusion Criteria:

- Not suitable for full active treatment

- Had dilated, unreactive pupils

- Mass lesions (unless too small to require surgery)

- Spinal cord injury

- Cardiac arrest at the scene
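Because every excluded patient fails at least one of these rules, the criteria translate naturally into a screening function that records why each patient was excluded, which is exactly the information a good flow diagram summarises. The Python sketch below is hypothetical: the `Patient` fields and the function name are invented for illustration, a patient can fail several criteria at once, and it encodes only the criteria listed above, not the ICP trigger that also had to be met before randomisation.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Patient:
    # Hypothetical fields chosen to mirror the criteria listed above.
    age_years: int
    gcs: int
    marshall_class: Optional[int]  # CT Marshall class, if reported
    penetrating_injury: bool
    suitable_for_full_active_treatment: bool
    dilated_unreactive_pupils: bool
    mass_lesion_requiring_surgery: bool
    spinal_cord_injury: bool
    cardiac_arrest_at_scene: bool


def ineligibility_reasons(p: Patient) -> list[str]:
    """Return the reasons a patient fails the listed criteria (empty if none)."""
    reasons = []
    # Inclusion criteria
    if not 15 <= p.age_years <= 59:
        reasons.append("age outside 15-59 years")
    severe_tbi = 3 <= p.gcs <= 8 or p.marshall_class == 3
    if p.penetrating_injury or not severe_tbi:
        reasons.append("not a severe non-penetrating TBI")
    # Exclusion criteria
    if not p.suitable_for_full_active_treatment:
        reasons.append("not suitable for full active treatment")
    if p.dilated_unreactive_pupils:
        reasons.append("dilated, unreactive pupils")
    if p.mass_lesion_requiring_surgery:
        reasons.append("mass lesion requiring surgery")
    if p.spinal_cord_injury:
        reasons.append("spinal cord injury")
    if p.cardiac_arrest_at_scene:
        reasons.append("cardiac arrest at the scene")
    return reasons


# A hypothetical 62-year-old with a surgical mass lesion fails two criteria.
patient = Patient(age_years=62, gcs=6, marshall_class=None,
                  penetrating_injury=False,
                  suitable_for_full_active_treatment=True,
                  dilated_unreactive_pupils=False,
                  mass_lesion_requiring_surgery=True,
                  spinal_cord_injury=False, cardiac_arrest_at_scene=False)
print(ineligibility_reasons(patient))
# prints: ['age outside 15-59 years', 'mass lesion requiring surgery']
```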

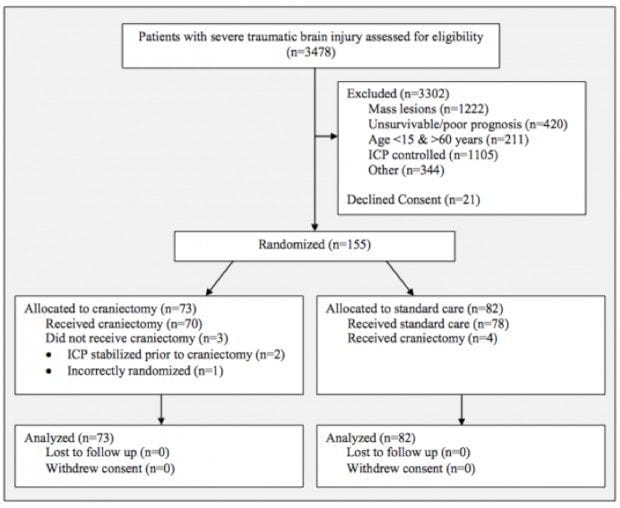

The write-up states that of the 3478 patients assessed (those likely to meet the inclusion criteria), only 155 were enrolled in the study. This already suggests that the findings are directly applicable to only a small percentage of severe head injuries. However, this gives little idea of why patients were excluded, e.g. refused consent, age, spinal injury etc.
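Putting the two numbers from the write-up side by side makes the point sharper. The short Python snippet below simply does the arithmetic; `percentage` is a trivial helper introduced for illustration.

```python
def percentage(part: int, whole: int) -> float:
    """Express `part` as a percentage of `whole`."""
    return 100 * part / whole


assessed = 3478  # patients assessed, as reported in the write-up
enrolled = 155   # patients enrolled

print(f"Enrolled {enrolled} of {assessed} assessed "
      f"({percentage(enrolled, assessed):.1f}%), roughly 1 in {assessed / enrolled:.0f}")
# prints: Enrolled 155 of 3478 assessed (4.5%), roughly 1 in 22
```

Put the other way round, about 95.5% of assessed patients (3323 of 3478) did not enter the trial.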

In the supplement, made freely available online, the authors included a CONSORT Flow Diagram.

This provides a lot of information about the patients screened and those included in the study, in a simple format.

The first point to note is that only 21 patients’ families declined consent. For such an invasive procedure, in such sick patients, this is a staggering achievement. I would love to know what information was provided to families as getting patients to consent to comparatively simple treatments for a study can often be challenging.

The second point is that the majority of patients were excluded either on the basis of mass lesions (subdural or extradural haematoma) or because their ICP was controlled with medical therapy alone. This means that the findings are harder to extrapolate to patients who have a craniectomy for removal of a mass lesion, or to patients who have sustained elevated ICPs after the first 72-hour period (although this isn't particularly common). It does not mean that the study findings are irrelevant to these patients, just that we have to think more carefully about how they should be applied.

It is also worth noting that, in very difficult circumstances, the trial team did an excellent job of maintaining the study protocol, with only 7 of the 155 patients receiving the incorrect treatment. All patients enrolled in the study completed it, with no losses to follow-up.
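These two figures can be expressed as the proportions often used to judge trial conduct. The Python sketch below is illustrative; `flow_quality` and its metric names are hypothetical, and "completed follow-up" here simply means not lost to follow-up.

```python
from __future__ import annotations


def flow_quality(randomised: int, wrong_treatment: int,
                 lost_to_follow_up: int) -> dict[str, float]:
    """Simple conduct metrics that can be read straight off a flow diagram."""
    return {
        # Proportion who actually received the treatment they were allocated
        "received_allocated_treatment": (randomised - wrong_treatment) / randomised,
        # Proportion with an outcome available at the end of follow-up
        "completed_follow_up": (randomised - lost_to_follow_up) / randomised,
    }


# DECRA figures: 155 enrolled, 7 received the incorrect treatment, 0 lost.
for name, value in flow_quality(155, 7, 0).items():
    print(f"{name}: {value:.1%}")
```

With these inputs the sketch reports that 95.5% of patients received their allocated treatment and 100.0% completed follow-up.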

Summary

DECRA demonstrated that not every patient with a raised ICP benefits from this form of craniectomy, and that those who receive it still require ongoing medical management. DECRA has encouraged a more holistic approach to the management of ICP in the severely brain-injured patient. Rather than simply lifting the lid, we now concentrate on optimal medical therapy and a more detailed consideration of why the patient may have an elevated ICP. This can only be of benefit to our patients.

The CONSORT diagram shows the groups of patients to whom we should be cautious about blindly applying the findings of DECRA. Hopefully RESCUE ICP will answer some of the remaining questions more clearly when it finally meets its recruitment targets.

CONSORT diagrams are increasingly included as part of the main publication but they are often found in the supplemental material. I would encourage people to look for them in any publication of significance as they highlight a lot of information in a very accessible format.

The CONSORT and PRISMA checklists are an excellent resource and freely available online.

References

- CONSORT Flow Diagram Generator. [Cited 5 Dec 2014.] Available from URL: https://depts.washington.edu/hrtk/CSD/

- CONSORT Statement. [Cited 5 Dec 2014.] Available from URL: http://www.consort-statement.org

- Cooper DJ, Rosenfeld JV, Murray L, Arabi YM, Davies AR, D'Urso P, Kossmann T, Ponsford J, Seppelt I, Reilly P, Wolfe R; DECRA Trial Investigators; Australian and New Zealand Intensive Care Society Clinical Trials Group. Decompressive craniectomy in diffuse traumatic brain injury. N Engl J Med. 2011 Apr 21;364(16):1493-502. doi: 10.1056/NEJMoa1102077. Epub 2011 Mar 25. Erratum in: N Engl J Med. 2011 Nov 24;365(21):2040. PubMed PMID: 21434843.

- Finfer S. The light and dark side of research and publication. Intensive Care Network. [Cited 5 Dec 2014.] Available from URL: http://intensivecarenetwork.com/finfer-the-dark-side-of-research/

- PRISMA Statement. [Cited 5 Dec 2014.] Available from URL: http://www.prisma-statement.org
